A Complexity-Coherent Approach to Suicide Intervention

A review of the ASIST suicide-intervention model

This week I had the opportunity to attend a suicide intervention course offered by our Air Force Academy HQ First Sergeant and the Senior Enlisted Leader of the Religious Affairs Office. The approach we were trained in, called Applied Suicide Intervention Skills Training (ASIST), comes from an organization called LivingWorks, which certifies trainers to facilitate their developed course content and provides materials at a cost for all trainings. I've had my share of contact with the pervasive threat of suicidality, having lost a few colleagues to suicide and known a lot more who endured ideation, so it felt logical to attend the course. I would love for my next attempt at intervention to have a higher chance of success. I am also deeply interested in approaches to solving complex problems, so I was curious to explore the ASIST framework. The organization and their methods have been around for four decades at this point and make assurances that their program is evidence-based and always evolving in response to continued research and efficacy-monitoring of the methods as employed in different domains. On their website, LivingWorks provides links to peer-reviewed studies and reports that claim to demonstrate impact.

I should note that I am a skeptical person, particularly of scaled institutional solutions to complex problems, so I attended this course with a significant degree of skepticism. I walked away at the end of the 2-day training convinced that ASIST is a strong and effective toolkit for addressing the problem of suicide, specifically in how it is coherent with the degree of complexity one faces in this domain. I'd like to explain in this article what I mean by that.

There is hardly a domain as difficult to read, much less forecast, as the human emotional landscape. It is highly complex in that there are countless visible and invisible interconnected factors that give rise to the emotional state that a person might be experiencing at a particular moment or over a period. The simple removal of factors is often not enough to resolve the emotional state. It might persist. It might seem completely incongruent with the situation or context. An experience for one person might result in mere frustration while that same experience for another might lead to complete emotional devastation. Therapeutic approaches that work for large numbers of people might make the problem worse for others. Therapeutic approaches that work for a period might stop working entirely for no apparent reason. As evidence, just spend a little time looking at reviews of psychoactive medications. Here are a few quotes from different reviewers on the very popular antidepressant Wellbutrin:

"This medication makes jokes funny, trees prettier, and makes me feel emotionally stronger."

"...I began to hallucinate... I started seeing things that weren’t there and having really dark thoughts. This drug took over my life and caused me to make irrational decisions like up and moving 1200 miles across country for no apparent reason whatsoever."

"Wellbutrin has changed my life! I was such a terrible, irrational, and very depressed person before this medication."

"Experienced severe paranoia with psychosis (spiders all over walls, painful bug-crawling sensation under skin, auditory hallucinations)."

"It actually made me feel worse and more depressed. I didn’t realize it was the reason for being so rude and aggressive for no reason until it became very clear!"

Because of our innate and learned habits of linear, mechanistic thinking, we have a tendency to think we can pin down complex things to:

A limited set of identifiable causal mechanisms

Clear, repeatable methods for detection, such as signals which indicate its presence or absence

Singular, fixed therapeutic approaches which, if they work once, will continue to work every time

Linear, mechanistic thinking also lends itself to scaled solutions within institutions. As an example, the Air Force has historically taught us to deal with suicidality using the ACE model. ACE stands for "Ask, Care, Escort", and it goes a little something like this:

Ask if people are suicidal when you suspect it.

Be empathetic to their feelings.

Immediately escort them to an expert who is qualified to handle suicidal people (Emergency Room, Mental Health clinic, etc.).

This is very clearly an ordered solution to a complex problem. It offers a constrained set of steps which, if followed without deviation every time, might actually result in worse outcomes. This might be apparent to anyone who has had any contact with people who experience occasional or regular suicidality, who have entered and exited suicidal feelings a number of times and might not appreciate being dragged to the ER or getting authorities involved. Or to anyone who has ever thought about how trust is gained or lost in how you respond to being told sensitive information. I often have interactions where someone tells me something in confidence that I think should probably be acted on, and then I don't act on it because they don't consent to me taking action when I ask "Do you mind if I [take action]?". There are a limited number of circumstances when I will act unilaterally on privately shared information, because my relationship with that person going forward might be irreparably damaged by me using their voluntary sharing to create effects that they did not volunteer for or believe will be helpful. Whether they share information with me in the future depends on my relationship with them, so in most circumstances impact to that relationship factors heavily in whether and how I respond in the moment. It is more important that I continue to be an effective sensor and support for them than that I respond with severity this time. The next time a person is feeling suicidal or going through some other severe and sensitive difficulty, they might simply not share with me because they did not appreciate how I responded last time. But even without this very specific perspective on the primacy of relationships, I know that the ACE model is probably not the ideal approach because of what I learned about problem-solving in complexity from the Cynefin framework.

How to Approach Complexity

My favorite resource on how to operate in complexity is Dave Snowden's Cynefin framework. If you're not already familiar, I did a Cynefin explainer on YouTube.

One purpose of Snowden's framework is to guide the decision-making heuristics that leaders apply in different contexts based on whether they fall into the clear, complicated, complex, or chaotic domain.

The clear and complicated domains are considered “ordered domains” because cause and effect are discernible in advance; while the other two domains are “unordered”—in complexity, causality can only be determined in retrospect (often incorrectly); and in chaos, it might not be identifiable at all.

In the "clear" domain, causality is obvious to all or easily discernible. One need only determine what category the problem fits into and can respond with the application of best practice for that category. They sense, categorize, then respond. This is the domain we’re most comfortable in, because the level of constraint ostensibly gives us the greatest degree of control, so we really like to use categorization to simplify problem-sets.

In the complicated domain, those with expertise can determine causality and anticipate the effects of inputs through analysis; thus the decision-making process follows the steps of 'sense, analyze, respond'. In this domain, some degree of flexibility must exist in how the expert responds, so it is the domain of ‘good practice’ rather than ‘best practice’. We can think of a lot of medical practice as fitting into this domain, because diagnosis and effective therapeutic approaches can be achieved most of the time with sufficient expertise, and their analysis can often extend beyond mere categorical checklists.

The complex domain differs from the clear and complicated domains in that it is unordered, meaning that systems don't respond in a purely predictable manner; response must pivot off of timely feedback showing conditions and patterns at the present moment (future states cannot be predicted). The decision-making steps are "probe, sense, respond". In the complex domain, ground-level leaders must not allow previously observed patterns to bias their decision-making. What worked before might not work again, because the system and its complex configuration are always evolving. So we must err on the side of testing decisions through small experiments against our dynamic, unpredictable context. We probe the present state to sense what response is warranted. The complex domain is driven by "unknown unknowns", thus at any point, the rules and patterns a leader has identified (and based decisions off of) may simply cease to apply. Our only hope for maintaining control within complexity is to exercise agility, responding to signals of dynamical shifts that we continuously sense out through regular probes. This is the domain of culture: we might probe and experiment our way to nudge the culture into very positive patterns, only to discover that the system evolves into a new configuration, based on myriad factors, that renders it impervious to our previous interventions. I suggest that complex is the domain of most psychological and emotional crises.

The chaotic domain represents a crisis state which lacks usable or exploitable patterns and constraints. The intervention recommended in this domain is “to set draconian constraints” to try and move the system (or at least parts of it) into more stable patterns.

Our efforts at establishing scalable solutions to cope with servicemember suicide and mental health crises have historically been undermined by a tendency to apply solutions better suited for simpler domains. As stated above, this tendency stems from innate and learned thinking habits (we have a hammer (experts and processes), therefore this problem looks like a nail), but it’s also because simple things are easier to scale. The example I shared above, of the Ask, Care, Escort model, illustrates the mismatch I’m talking about. The "Ask" step serves to sense what category of situation you're facing ("suicidal" or "not suicidal"). The Care and Escort protocols are rigid, non-negotiable, nonadaptive responses to the "suicidal" category. These are clearly the heuristics we should employ in the "Clear" domain (Sense, Categorize, Respond). As described above, the misapplication of clear-domain heuristics to a complex domain problem like this can often result in catastrophic failure—loss of trust and therefore a shift in the dynamics which previously facilitated the intervener’s ability to ever know when someone was at risk of harming themselves. In complexity, relationships are key.

Ordered systems with categorical constraints bear significant responsibility for inhibiting servicemembers from seeking help. The system responds rigidly to help-seeking behaviors which means a lot of people don't seek help. A big part of what facilitates a sense of safety in sharing sensitive information is knowing that the response to that information will not be rigid, categorical, and predetermined. If you want to maximize my sense of safety and therefore my propensity for sharing, when I share sensitive information, I should have final say in how that information is used.

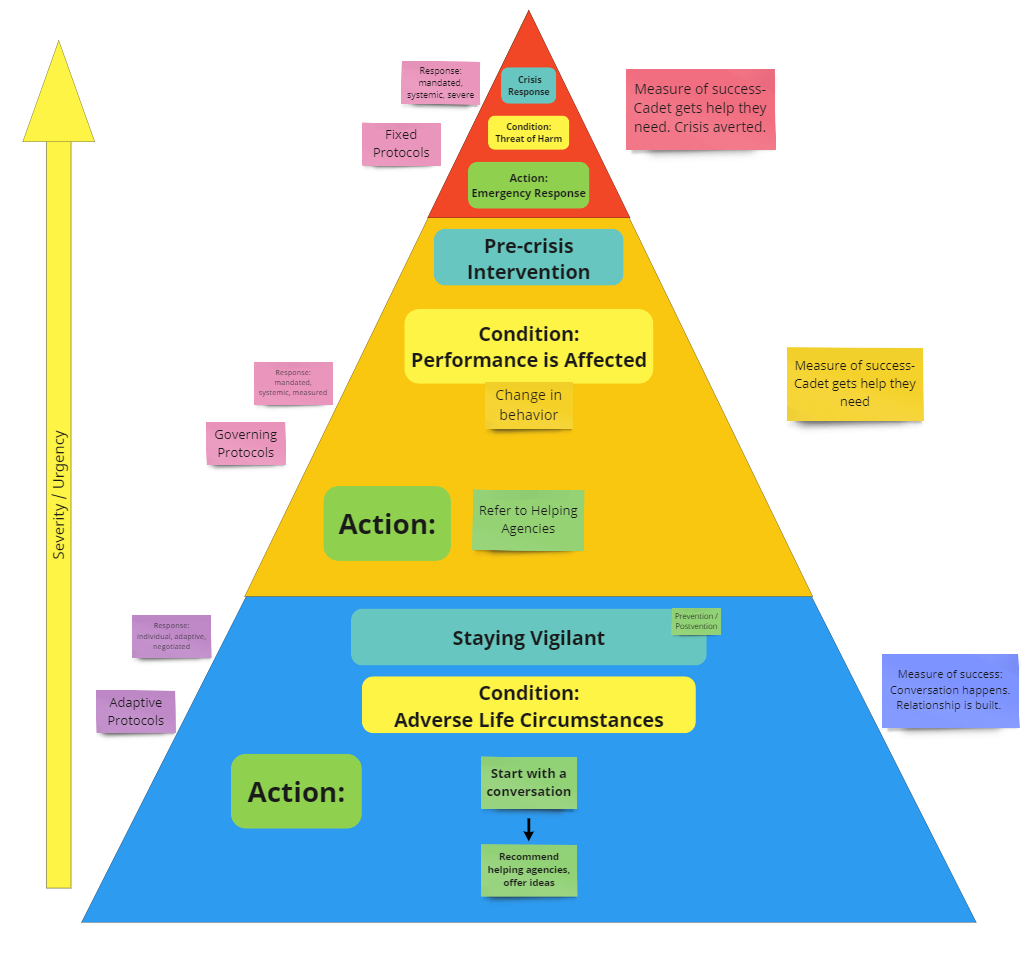

A few years ago, I employed Cynefin as a primary lens to build out a crisis response framework for the Air Force Academy Dean of Faculty. The purpose of this tool was to help guide faculty members’ decision-making in responding to a person in crisis, particularly in responding to cadets, who often approach their professors with personal struggles ranging from simply painful to life-altering and catastrophic.

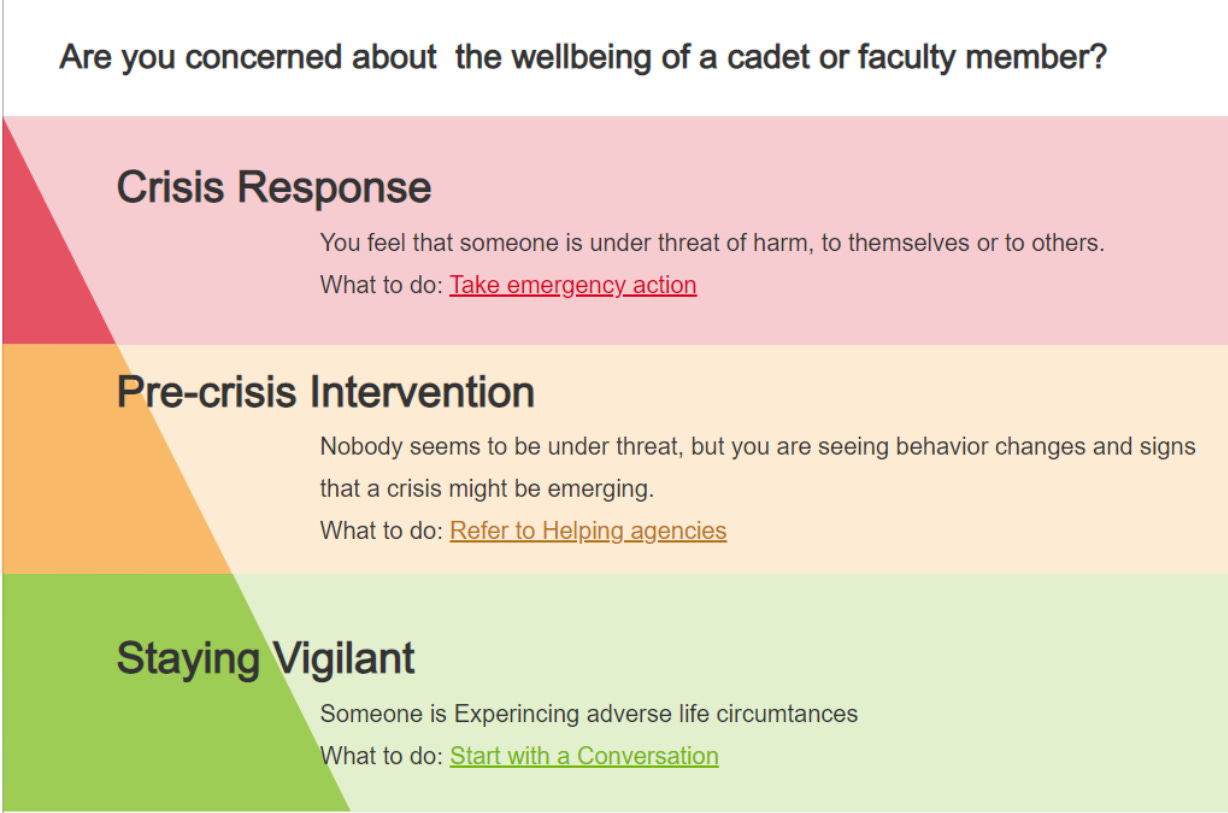

Here is a simple depiction of what I came up with, in the form of a splash page for an application.

This simple little app guided users to resource pages for conversation guides (green), a database of helping agencies (yellow), and checklists of specific emergency actions (red). It was based on the following depiction I came up with for when what kinds of constraints and protocols were helpful and not harmful in cadet crisis response:

The purpose of this whole endeavor was to guide faculty to the least restrictive course of action where appropriate, because unnecessarily imposed constraints in complexity limit adaptive capacity (which is highly necessary in complexity), can harm relationship (the basis for positive patterns in complexity), and might make matters worse. For a majority of cases, the behavior we wanted from professors was to engage in complex conversation—to sense out details and build relationship, to nudge the relational system between them and students into one with greater capacity for current and future response. If they detected higher urgency information in the course of that conversation, they could move up the pyramid into something like referring to helping agencies.

This is of course a wildly simplistic approach (all models are wrong). We simply didn’t want a cadet who went to a person they trust to be pushed off immediately to someone they didn’t know at some helping agency. That could inhibit trust and inhibit further help-seeking. We definitely didn’t want professors activating emergency responses for non-urgent circumstances. Most of the time, what a distressed person needs is a listening ear and some help sensemaking—not an appointment with a stranger in a few weeks. The conversation is important, and if we could build this type of caretaking conversation as a common practice, the network grows healthier, increasing overall capacity for crisis sensing and response.

Another student at the ASIST training I attended was a USAFA professor. I asked him whether he had ever employed the application we developed. He said he might have heard about it once when he first arrived but wasn’t sure where to find it.

Application design and adoption are also complex issues… The primary constraint that prevented professors from engaging in that sensemaking conversation with students might still exist, and it might actually be more effectively addressed by a program like ASIST.

At this point, I’d like to share what I particularly liked about ASIST training as a model for suicide intervention, much of which is informed by the principles and theories explored above.

The first thing I really appreciated is that the training did not tell me to immediately call the police or rush to the ER every time anybody said they were suicidal. As an approach, it is suited to complexity rather than rigidly prescriptive. Put in the language of Cynefin, the method employs primarily enabling constraints rather than governing or fixed constraints. It enables adaptive response by the caretaker based on what they hear over the course of a loosely guided conversation, with clear checkpoints and guidance on when to shift into more prescriptive or constrained approaches.

Different circumstances require different degrees of constraint. Maximizing autonomy for the person in distress and ensuring they buy in to (or even drive) whatever happens next is massively enabling, unless there is an imminent threat of harm to self or others.

ASIST incorporates awareness of:

When to impose significant constraints against the will of the person at risk (I’m doing these things whether you like it or not)

When to impose minimal constraints (I’m making sure we do this, but we’re doing it together and with your input)

When to impose almost no constraints (I’d prefer if you do these things, but it’s entirely up to you).

Sharing of sensitive information (like feelings of suicidality) is often relationship-based, and one doesn’t have the right relationship just because they hold some role (leader, first sergeant, supervisor, counselor, etc.). So ASIST equips people at every level to serve as sensors of the information we’re looking for (signals of suicidality). You do not need specialized knowledge or skills or position to be equipped to be a counselor under this model. It is useful for literally anyone, and thus increases the capacity of the entire network to probe, sense, and respond appropriately.

One final thing that made ASIST training excellent in my opinion was the choice to dedicate a significant portion of class-time to role-play. No amount of knowledge about clean frameworks and models can truly equip a person to operate in the messy reality of difficult conversations. I think it was Brené Brown’s writing that first convinced me of the usefulness of role-play as a training tool for leadership. It is always a little corny and always deeply awkward, which gives participants a visceral sense of just how unclear it can be where you are and where you need to go next. Almost the entirety of day 2 we spent practicing employing the tools and guidance they had introduced on day 1 and watching other students practice in front of the group. This practice and watching others really hammered home the principles and steps while solidifying the importance of staying present and aware when deviation from the presented framework might be necessary, or when you had to slow down and stay in a phase to make sure you were gathering and responding to the information.

I am by no means suggesting that this training lacks flaws. I can imagine plenty of ways in which it might fail to save a life, but I think that’s going to be the case with any approach to a problem as complex as this. I found myself particularly curious about the research that led them to specific elements, but I became more convinced throughout the course and thinking about it afterwards that theirs is likely an approach that does a good job of balancing efficacy with accessibility, ensuring one doesn’t have to have significant training (beyond their 2-day course) or experience in order to employ the model.

The reason I decided to share this writeup after taking the training is that a few hours into day 1, I suddenly remembered that I had written about this exact thing 5 years ago, informed by my personal studies of leadership, complexity, Cynefin, and group facilitation, and my regular reflections on our institutional approaches to this complex issue. I reviewed that unpublished essay as I wrote this and adapted a lot of it to share here. That essay expressed that, based on my understanding of complex systems, the most effective approach to the complex problem of suicide in the Air Force necessarily involved equipping a large number of diverse agents across the organization to sense weak signals within their proximity, to engage in probative conversations looking for patterns that required intervention, and to adaptively respond to the patterns detected.

Five years later, I found myself in this course, which seems to do exactly that, and I think we’d be better off basically everywhere, in more domains than just the problem of suicide, if more people attended this course and learned to apply these practices.
